In AI infrastructure, the efficiency of the underlying hardware often sets the ceiling on model performance. Meta serves AI at enormous scale, from personalized recommendations to generative AI assistants, and relies on a diverse fleet of computing hardware: NVIDIA and AMD GPUs, Meta's custom MTIA silicon, and CPUs. To harness this heterogeneous environment, every high-level model operation must be translated into optimized, chip-specific implementations known as kernels. Traditionally, writing these kernels demanded weeks of manual effort from expert engineers for each new chip generation or model architecture. In this second installment of the Ranking Engineer Agent series, we introduce KernelEvolve, an agentic system that automates kernel authoring and optimization, cutting development time from weeks to hours while delivering double-digit performance gains.

The Scaling Challenge of Kernel Optimization

Meta’s ranking models, which power services such as Ads Ranking, require a vast array of custom operators beyond standard general matrix multiplications (GEMMs) and convolutions. Vendor libraries cover only the most common operations, leaving a long tail of specialized kernels that must be hand-tuned for each hardware generation. As the number of models grows and the fleet spans more hardware types (NVIDIA, AMD, MTIA, CPUs), the manual approach becomes unsustainable. Engineers face a combinatorial explosion: every new model × hardware combination demands profiling, optimization, and debugging, a process that consumes weeks of scarce expert time.
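To make the multiplication concrete, here is a small Python sketch that counts tuning tasks as models × hardware targets × custom operators. The model names and operator counts are hypothetical, and the sketch assumes the worst case in which no kernel can be reused across models or targets.

```python
from itertools import product

# Hypothetical inventory for illustration; model names and operator counts are invented.
MODELS = {"ranking_model_a": 12, "ranking_model_b": 8, "ranking_model_c": 20}  # custom ops per model
HARDWARE = ["nvidia_gpu", "amd_gpu", "mtia", "cpu"]  # the four hardware families named above

def tuning_tasks(models, hardware):
    """Enumerate every (model, hardware, operator index) triple that needs its own tuned kernel.

    Assumes no kernel is shared across models or hardware targets (a worst case).
    """
    return [
        (model, hw, op)
        for model, hw in product(models, hardware)  # iterating a dict yields its keys
        for op in range(models[model])
    ]

tasks = tuning_tasks(MODELS, HARDWARE)
print(len(tasks))  # (12 + 8 + 20) ops x 4 targets = 160

# Adding one more chip generation adds a full column of work: 40 more kernels.
print(len(tuning_tasks(MODELS, HARDWARE + ["next_gen_accelerator"])))  # 200
```

Each new target adds work proportional to the total operator count across all models, which is why manual tuning scales poorly as both axes grow.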

This bottleneck threatens to slow innovation. Without a scalable solution, the performance potential of both new chips and new ML architectures remains locked behind labor‑intensive manual work. The need for an automated system capable of exploring thousands of kernel variants is clear.

Introducing KernelEvolve: An Agentic Approach

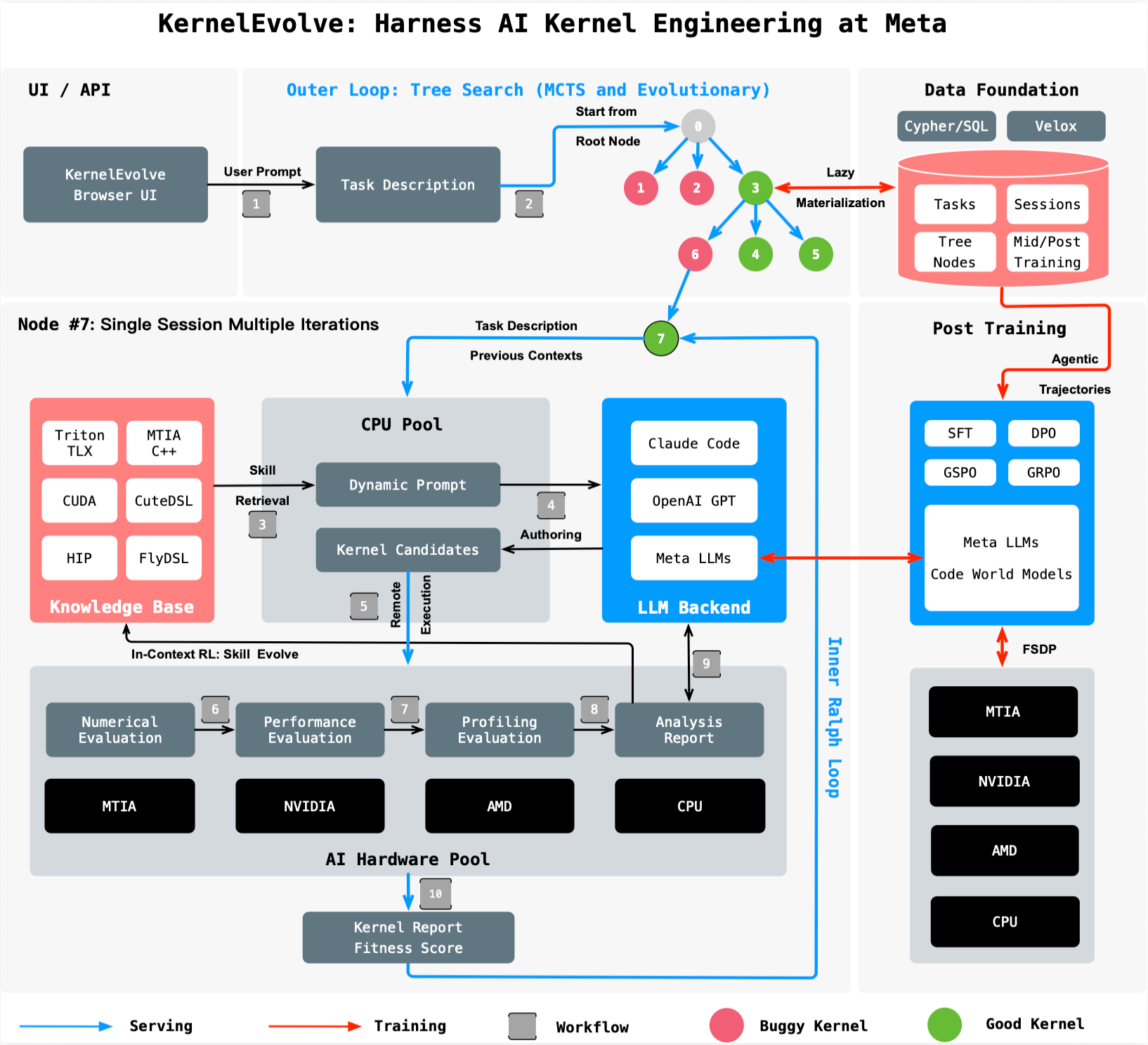

To meet this challenge, Meta built KernelEvolve, a kernel authoring agent integrated with the Ranking Engineer Agent. KernelEvolve reframes optimization as a search problem. A purpose‑built job harness evaluates each candidate kernel on the target hardware, feeds detailed diagnostics back to a large language model (LLM), and drives a continuous search over hundreds of alternatives. This closed‑loop process mimics the iterative refinement of a human expert but operates at machine speed and scale.
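The shape of that closed loop can be illustrated with a toy Python version. This is a sketch under heavy assumptions, not KernelEvolve's algorithm: `harness` is a hypothetical stand-in that scores two tunables (tile size and warp count) with an invented cost formula instead of running on hardware, and `propose` replaces the LLM with a simple rule that follows the diagnostics half the time and mutates at random otherwise. Only the structure (propose, evaluate, feed diagnostics back, keep the best) mirrors the description above; the search strategy itself is plain greedy hill climbing.

```python
import random

# Hypothetical tunables for a single kernel (illustrative values only).
BLOCKS = [16, 32, 64, 128, 256, 512, 1024]
WARPS = [1, 2, 4, 8]

def harness(cfg):
    """Stand-in for the on-device harness: scores a config with an invented cost formula.

    Returns (runtime, diagnostics). A real harness would compile, validate, and profile.
    """
    launch_cost = 1000 / cfg["block"]       # toy term: small tiles mean more iterations
    tile_cost = cfg["block"] / 8            # toy term: large tiles mean resource pressure
    warp_cost = 3 * abs(cfg["warps"] - 4)   # toy term: penalty away from a sweet spot
    diagnostics = {"launch_cost": launch_cost, "tile_cost": tile_cost, "warp_cost": warp_cost}
    return launch_cost + tile_cost + warp_cost, diagnostics

def step(values, current, direction):
    """Move one position up or down an ordered list of legal values, clamped at the ends."""
    i = values.index(current) + direction
    return values[max(0, min(i, len(values) - 1))]

def propose(cfg, diagnostics, rng):
    """Stand-in for the LLM: follow the diagnostics half the time, otherwise mutate randomly."""
    child = dict(cfg)
    if rng.random() < 0.5:
        # Grow tiles if the launch term dominates, otherwise shrink them.
        direction = 1 if diagnostics["launch_cost"] > diagnostics["tile_cost"] else -1
        child["block"] = step(BLOCKS, cfg["block"], direction)
    else:
        key = rng.choice(["block", "warps"])
        values = BLOCKS if key == "block" else WARPS
        child[key] = step(values, cfg[key], rng.choice([-1, 1]))
    return child

def search(start, iterations=200, seed=0):
    """Greedy closed loop: propose, evaluate, and keep a candidate only if it is faster."""
    rng = random.Random(seed)
    best = dict(start)
    best_time, best_diag = harness(best)
    for _ in range(iterations):
        candidate = propose(best, best_diag, rng)
        runtime, diag = harness(candidate)
        if runtime < best_time:
            best, best_time, best_diag = candidate, runtime, diag
    return best, best_time

best, best_time = search({"block": 16, "warps": 1})
print(best, best_time)
```

Because the toy cost is separable and has a single minimum along each tunable, this loop settles on `block=64, warps=4`, the minimum of the invented formula. Real kernel search spaces are typically far less well-behaved than this toy.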

How It Works

The agent begins with a high-level description of the desired operator, expressed in a domain-specific language (DSL) such as Triton, CuTe DSL, or FlyDSL, or directly in low-level code (CUDA, HIP, or MTIA C++). It then generates multiple candidate implementations, runs them through the harness, gathers performance counters, and uses the LLM to suggest modifications. Over successive iterations, KernelEvolve converges on kernels that match or outperform their human-written counterparts. The system is not tied to a particular chip or operator: for each target, it automatically tunes memory access patterns, parallelism, and instruction selection.
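In the system described above, the harness runs each candidate on the target hardware and gathers performance counters. The sketch below only shows the shape of such an evaluation step in plain Python. It is a minimal illustration, not KernelEvolve's harness: the operator (a dot product), the candidate functions, and `run_harness` are all hypothetical, and wall-clock time from `timeit` stands in for device profiling. Correctness is checked against a trusted reference before anything is timed, so a fast but wrong variant can never win.

```python
import functools
import math
import operator
import random
import timeit

def reference_dot(a, b):
    """Trusted reference implementation: a plain accumulation loop."""
    total = 0.0
    for x, y in zip(a, b):
        total += x * y
    return total

# Hypothetical candidate variants, standing in for generated kernels.
def cand_map(a, b):
    return sum(map(operator.mul, a, b))

def cand_fsum(a, b):
    return math.fsum(x * y for x, y in zip(a, b))

def cand_buggy(a, b):
    # Deliberate off-by-one: drops the last element, so the harness should reject it.
    return sum(x * y for x, y in zip(a[:-1], b[:-1]))

def run_harness(candidates, reference, n=10_000, repeat=3, number=20, seed=0):
    """Check each candidate against the reference, then time only the correct ones."""
    rng = random.Random(seed)
    a = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    b = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    expected = reference(a, b)
    report = {}
    for name, fn in candidates.items():
        out = fn(a, b)
        if not math.isclose(out, expected, rel_tol=1e-7, abs_tol=1e-9):
            report[name] = {"status": "incorrect", "abs_error": abs(out - expected)}
            continue
        # Best-of-`repeat` wall-clock time for `number` calls: a proxy for device profiling.
        seconds = min(timeit.repeat(functools.partial(fn, a, b), number=number, repeat=repeat))
        report[name] = {"status": "ok", "seconds": seconds}
    return report

candidates = {"cand_map": cand_map, "cand_fsum": cand_fsum, "cand_buggy": cand_buggy}
report = run_harness(candidates, reference_dot)
correct = [name for name, r in report.items() if r["status"] == "ok"]
fastest = min(correct, key=lambda name: report[name]["seconds"])
print(report["cand_buggy"]["status"], fastest)
```

The returned `report` dictionary is the kind of structured diagnostic that could be serialized into the next prompt, so the model sees both which variants failed validation and how the correct ones compare.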

Measured Performance Gains

KernelEvolve delivers substantial improvements across Meta’s heterogeneous fleet:

- Inference throughput: For Meta’s Andromeda Ads model on NVIDIA GPUs, KernelEvolve achieved a 60% improvement in inference throughput.

- Training throughput: On Meta’s custom MTIA chips, KernelEvolve increased training throughput for an ads model by more than 25%.
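Throughput gains can also be read as reductions in device time per unit of work. Assuming throughput $T$ is measured as work completed per unit time on the same hardware, so that the time per unit of work is $t = 1/T$, a throughput gain $g$ gives the following (this is arithmetic on the reported figures, not an additional measurement):

$$
T' = (1 + g)\,T \quad\Longrightarrow\quad t' = \frac{1}{T'} = \frac{t}{1 + g}
$$

$$
g = 0.60:\; t' = \frac{t}{1.60} = 0.625\,t \qquad\qquad g > 0.25:\; t' < \frac{t}{1.25} = 0.8\,t
$$

Under that assumption, the 60% inference gain corresponds to 37.5% less aggregate device time per inference (not necessarily lower per-request latency), and the training gain to more than 20% less device time per unit of training work.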

These results were obtained in a fraction of the time required by manual tuning—compressing weeks into hours. The agent not only matches but often exceeds the performance of kernels crafted by domain experts, demonstrating that automated search can unlock hardware potential that human intuition might miss.

Broad Applicability Across Hardware and Models

KernelEvolve is not limited to a single chip or model family. It supports NVIDIA and AMD GPUs, Meta’s proprietary MTIA chips, and CPUs. The system can generate kernels in high-level DSLs (Triton, CuTe DSL, FlyDSL) for rapid prototyping and in low-level languages (CUDA, HIP, MTIA C++) for fine-grained control. This versatility makes it applicable to a wide range of AI models beyond Ads Ranking, including computer vision, natural language processing, and generative AI workloads.

The agent’s ability to optimize across hardware generations also future‑proofs Meta’s infrastructure. As new chips appear, KernelEvolve can immediately begin exploring the kernel space, reducing the time to peak performance for each new platform.

Conclusion and Outlook

KernelEvolve represents a paradigm shift in how large‑scale AI infrastructure is optimized. By treating kernel coding as an agentic search problem, Meta has automated a critical, time‑intensive task that previously required deep human expertise. The system accelerates development, boosts performance by double‑digit percentages, and works across a heterogeneous hardware fleet that is only growing more diverse.

For more technical details, see the paper “KernelEvolve: Scaling Agentic Kernel Coding for Heterogeneous AI Accelerators at Meta”, accepted at the 53rd International Symposium on Computer Architecture (ISCA) 2026. This work is a key component of Meta’s broader Ranking Engineer Agent initiative, which continues to push the boundaries of autonomous AI infrastructure optimization.