Kubernetes initially relied on cgroup v1's CPU shares for resource allocation, but the industry's move to cgroup v2 introduced a new CPU weight system. The conversion from shares to weight, while necessary, brought unexpected problems that impacted workload priorities and fine-grained control. This Q&A explains the old formula, the issues that arose, and the improvements being made.

Why did Kubernetes originally use cgroup v1 CPU shares?

When Kubernetes was designed, cgroup v1 was the standard control group mechanism. It used CPU shares to allocate relative CPU time among processes. The default shares value was 1024, representing equal priority. Kubernetes derived CPU shares from a container's CPU request using the formula: cpu.shares = milliCPU × (1024 / 1000). For example, a container requesting 1000m (1 CPU) got 1024 shares, equal to the default, while a 100m request got only 102 shares. This system worked well within cgroup v1, providing predictable relative priority among workloads.
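The request-to-shares formula above can be sketched in a few lines of Python. This is a minimal illustration, not the kubelet's code: the function name `milli_cpu_to_shares` and the constant names are hypothetical, and truncating integer division plus clamping to the cgroup v1 shares range (2 to 262144) are assumptions made for the sketch.

```python
# Hypothetical constants for illustration; names are not taken from Kubernetes.
MIN_SHARES = 2          # lower bound of the cgroup v1 cpu.shares range
MAX_SHARES = 262144     # upper bound of the cgroup v1 cpu.shares range
SHARES_PER_CPU = 1024   # shares assigned to one full CPU
MILLI_CPU_PER_CPU = 1000


def milli_cpu_to_shares(milli_cpu: int) -> int:
    """Map a CPU request in millicores to a cgroup v1 cpu.shares value.

    Assumes truncating integer division and clamping to the valid range.
    """
    shares = milli_cpu * SHARES_PER_CPU // MILLI_CPU_PER_CPU
    return max(MIN_SHARES, min(MAX_SHARES, shares))


for request in (100, 500, 1000, 2000):
    print(f"{request}m -> {milli_cpu_to_shares(request)} shares")
```

Under these assumptions, a 1000m request yields 1024 shares and a 100m request yields 102, matching the figures above.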

What changed when moving to cgroup v2?

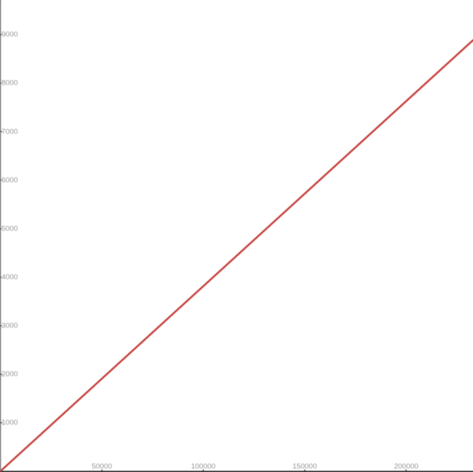

cgroup v2 replaced the CPU shares concept (range 2 to 262144) with CPU weight, which scales from 1 to 10000. This new metric is not directly compatible with the old shares value. To bridge this gap, KEP-2254 introduced a linear conversion formula: cpu.weight = 1 + ((cpu.shares - 2) × 9999) / 262142. This maps the old range onto the new one linearly. However, this seemingly straightforward transformation created significant challenges for Kubernetes workloads.

What was the linear conversion formula and how did it map values?

The formula from KEP-2254 linearly transforms cgroup v1 shares (which range from 2 to 262144, that is, 2^1 to 2^18) into cgroup v2 weight (ranging from 1 to 10000, that is, 10^0 to 10^4). It takes the old shares value, subtracts 2, multiplies by 9999, divides by 262142, and adds 1. While mathematically clean, this linear mapping does not preserve the default priority relationship. For instance, a container with 1024 shares (default v1) converts to only about 39 weight, far below the v2 default of 100. This mismatch is the root of several problems.
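To make the arithmetic concrete, here is a small Python sketch of the linear mapping. The function name `shares_to_weight_linear` is hypothetical, and the use of truncating integer division is an assumption chosen because it reproduces the values quoted in this article.

```python
def shares_to_weight_linear(shares: int) -> int:
    """Linear cgroup v1 shares -> cgroup v2 weight mapping.

    Implements: cpu.weight = 1 + ((cpu.shares - 2) * 9999) / 262142
    Truncating integer division is an assumption of this sketch.
    """
    return 1 + ((shares - 2) * 9999) // 262142


# Endpoints of the shares range map to the endpoints of the weight range.
print(shares_to_weight_linear(2))       # lowest shares value
print(shares_to_weight_linear(262144))  # highest shares value

# The v1 default of 1024 shares lands well below the v2 default weight of 100.
print(shares_to_weight_linear(1024))
```

The endpoints behave as expected (2 maps to 1 and 262144 maps to 10000), but 1024 shares maps to 39 rather than 100.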

What is the first major problem: reduced priority against non-Kubernetes workloads?

In cgroup v1, a container requesting 1 CPU (1000m) got 1024 shares, equal to the system default. This meant Kubernetes workloads had the same priority as other system processes. After conversion to cgroup v2, the same container receives a weight of only about 39, less than 40% of the default 100. As a result, Kubernetes workloads lose priority compared to non-Kubernetes daemons and processes. When the CPU is contended, Kubernetes containers can therefore receive a smaller share of CPU time than system services, undermining expected performance isolation.
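A rough way to see the effect is to compute the proportional share a cgroup would get when competing with a single sibling. The sketch below is a deliberately simplified model: it assumes two fully busy cgroups at the same level of the hierarchy sharing one CPU, which real node layouts may not match, and the helper name `cpu_fraction` is hypothetical.

```python
def cpu_fraction(own, others):
    """Fraction of contended CPU time for a cgroup with value `own`
    competing against sibling cgroups with the values in `others`.

    Works the same for v1 shares and v2 weights: both are relative.
    """
    return own / (own + sum(others))


# cgroup v1: container with 1024 shares vs. a system process at the default 1024.
v1_fraction = cpu_fraction(1024, [1024])

# cgroup v2 with the linear conversion: weight 39 vs. the default weight 100.
v2_fraction = cpu_fraction(39, [100])

print(f"v1: {v1_fraction:.0%} of contended CPU")
print(f"v2: {v2_fraction:.0%} of contended CPU")
```

In this simplified model the container drops from half of the contended CPU to a little over a quarter, even though its request did not change.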

What is the second major problem: unmanageable granularity for small requests?

The linear formula produces very low weight values for small CPU requests. For example, a container asking for 100m CPU gets a weight of just 4, which is far too low to create meaningful sub-cgroups within the container. This makes fine-grained resource distribution almost impossible, especially as future Kubernetes features (like KEP #5474) aim to enable sub-cgroup configuration. With cgroup v1, the same 100m request gave 102 shares, which was manageable for sub-grouping. The new conversion thus limits the ability to control CPU allocation at a granular level.
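The loss of resolution is easy to see by running a few small requests through both conversions. This is an illustrative sketch only: the function names are hypothetical, and truncating integer division in both steps is an assumption.

```python
def milli_cpu_to_shares(milli_cpu):
    """CPU request (millicores) -> cgroup v1 shares, clamped to 2..262144."""
    return max(2, min(262144, milli_cpu * 1024 // 1000))


def shares_to_weight_linear(shares):
    """Linear cgroup v1 shares -> cgroup v2 weight mapping."""
    return 1 + ((shares - 2) * 9999) // 262142


# Compare what small requests look like under v1 shares and v2 weight.
for request in (10, 20, 50, 100, 200):
    shares = milli_cpu_to_shares(request)
    weight = shares_to_weight_linear(shares)
    print(f"{request:>4}m -> {shares:>3} shares -> weight {weight}")
```

Under these assumptions, requests of 10m and 20m both collapse to the minimum weight of 1, whereas cgroup v1 still distinguished them as 10 and 20 shares.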

How does this affect Kubernetes workloads in practice?

For typical clusters, the reduced priority means that Kubernetes pods may get less CPU time than expected when system processes are active. This can lead to performance degradation for latency‑sensitive applications. The granularity problem hampers advanced use cases like quality‑of‑service partitioning within a pod. Operators who rely on fine‑tuning CPU distribution inside containers lose the ability to do so effectively. Both issues become more pronounced as clusters grow and as more services run outside Kubernetes' control.

What improvements are being made to the conversion formula?

I'm excited to announce that a new, improved conversion formula is being implemented. While the exact details are still under discussion, the goal is to address both major problems: bring the weight for a 1-CPU request back to roughly the cgroup v2 default (100), and provide higher weight values for small CPU requests to enable sub-cgroup configuration. The new formula will preserve the intent of CPU requests while avoiding the pitfalls of the linear mapping. This should help Kubernetes workloads keep competitive priority and give administrators back granular control.